Brain Composer

- Status: Work in Progress

- Date: June 2019

- Category: Creative Projects

- Tags: Brain Interface, AI-generated Music, Machine Interface, Collaborative Project

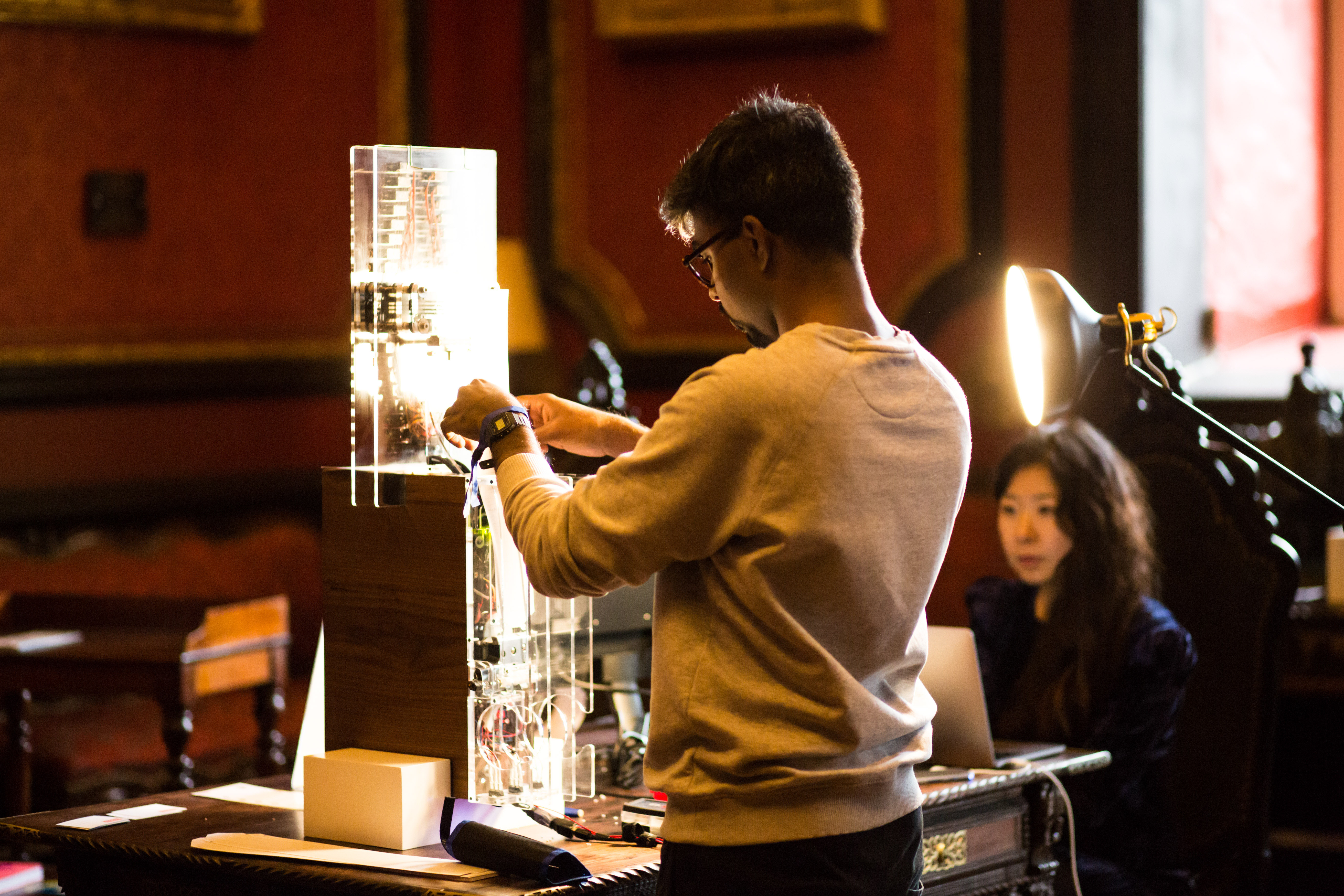

This project explores the possibilities of brain interfaces in musical applications. A short melody extracted from human brain signals triggers an AI engine that has been trained to produce music in the style of Bach (music style transfer). The generated music is then sent as a signal to a punching machine that produces a 30-note music strip. Please visit the Work in Progress page for more details on the development process. **Most of the visual materials are from INDEED INNOVATION's blog post.**

Team

As a creative technologist, I led the project from the initial concept through the hardware and software development. Specifically, I wrote the algorithms that read brain signals and translate them into musical notes (JavaScript), as well as the music generation part (Python).

Experience Process

We built an experience that harnesses the power of thought: algorithms turn a stream of thought into music and make it audible and tangible. Here is a summary of the steps:

Step 1. Read Brain Signal - As you concentrate, we measure the electrical activity of your cerebral cortex. Together, the electrochemical discharges of your nerve cells produce an individual vibration profile across the beta, alpha, theta and delta frequency bands.

Step 2. Music Generation - Based on your vibration profile, our creative code composes a unique piece of music. Inspired by Bach's works, but as unique as you are, your melody immediately becomes part of our digital music library.

Step 3. Punch Music - The puncher makes your music audible and tangible outside the digital world. Built with a 3D printer, ingenuity and engineering, the puncher produces what is essentially a Polaroid of your mental vibration profile: a 30-note music strip.

Step 4. Your melody is as individual as each of your thoughts, in both digital and analog form. Listen to the songs of other thinkers, or play your own tune anytime from here.
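Step 3 is described only at the experience level, so the following is just a rough sketch of the data a 30-note puncher needs: each note is assigned to one of the strip's 30 lanes, and its time step becomes the row. Everything about the machine here is an assumption. `HYPOTHETICAL_LANES` is a made-up chromatic range of 30 pitches (a real 30-note mechanism has its own fixed, not necessarily chromatic, set of pitches), and notes outside the range simply snap to the nearest lane.

```python
# Hypothetical lane layout: 30 consecutive semitones, MIDI 53 (F3) to 82 (A#5).
# A real 30-note strip mechanism has its own fixed set of pitches.
HYPOTHETICAL_LANES = list(range(53, 83))


def nearest_lane(pitch, lanes):
    """Return the index of the lane closest in pitch (ties go to the lower lane)."""
    return min(range(len(lanes)), key=lambda i: (abs(lanes[i] - pitch), lanes[i]))


def punch_plan(notes, lanes=HYPOTHETICAL_LANES):
    """Convert (step, midi_pitch) notes into sorted (row, lane) holes.

    Rows follow the time step, so simultaneous notes from polyphonic
    output share a row and are punched in different lanes. Two notes
    that snap to the same lane on the same row become a single hole.
    """
    return sorted({(step, nearest_lane(pitch, lanes)) for step, pitch in notes})


print(punch_plan([(0, 60), (0, 64), (2, 90), (4, 40)]))
# [(0, 7), (0, 11), (2, 29), (4, 0)]
```

Snapping to the nearest lane keeps the sketch short; transposing out-of-range notes by whole octaves would preserve pitch class better, at the cost of a little more logic.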

Interface

The eight indicators represent the eight brain signals, each covering a different frequency range, that can be extracted from the EEG sensor. Whenever a signal rises above its threshold value, it strikes a key on a hidden web piano running in the background (NeuroSky documentation). The melody is transcribed for 5-6 seconds and then sent to the background AI engine, which uses the transcribed melody as input to generate a polyphonic piece.
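The threshold logic of the interface can be sketched as follows. This is not the project's JavaScript code but a Python illustration under stated assumptions: the eight signals are taken to be the eight EEG power bands reported by NeuroSky's ThinkGear output (delta through mid-gamma), while the band-to-key mapping `BAND_TO_PITCH`, the threshold values and the 6-second window are hypothetical. A key fires only on a rising edge, so a band that stays above its threshold does not retrigger the same note on every reading.

```python
from dataclasses import dataclass

# Assumed band order: the eight EEG power values of NeuroSky's ThinkGear output.
BANDS = ["delta", "theta", "low_alpha", "high_alpha",
         "low_beta", "high_beta", "low_gamma", "mid_gamma"]

# Hypothetical mapping: one C-major piano key (MIDI number) per band.
BAND_TO_PITCH = dict(zip(BANDS, [60, 62, 64, 65, 67, 69, 71, 72]))


@dataclass
class NoteEvent:
    time: float  # seconds since the recording started
    pitch: int   # MIDI note number of the key that was struck


def transcribe(samples, thresholds, window=6.0):
    """Turn time-ordered (time, {band: power}) samples into note events.

    A key is struck only when its band crosses from at-or-below to above
    its threshold. Missing bands count as 0; samples after `window`
    seconds end the recording.
    """
    above = {band: False for band in BANDS}
    events = []
    for t, powers in samples:
        if t > window:
            break
        for band in BANDS:
            is_above = powers.get(band, 0) > thresholds[band]
            if is_above and not above[band]:
                events.append(NoteEvent(t, BAND_TO_PITCH[band]))
            above[band] = is_above
    return events


thresholds = {band: 50_000 for band in BANDS}  # hypothetical threshold values
samples = [
    (0.0, {"theta": 10_000}),
    (1.0, {"theta": 80_000}),                      # theta crosses: key 62
    (2.0, {"theta": 90_000, "low_beta": 60_000}),  # only low_beta crosses: key 67
    (3.0, {"theta": 20_000}),                      # theta falls back below
    (4.0, {"theta": 70_000}),                      # theta crosses again: key 62
    (7.0, {"delta": 99_000}),                      # outside the window, ignored
]
print([(e.time, e.pitch) for e in transcribe(samples, thresholds)])
# [(1.0, 62), (2.0, 67), (4.0, 62)]
```

Edge triggering is the design choice that matters here: comparing each reading only against the threshold would turn one sustained burst of activity into a run of repeated notes.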

AI-generated music embracing brain melodies

The pretrained AI engine (Polyphony RNN from Magenta) reproduces the style of Johann Sebastian Bach, using the human-generated brain melody as the base melody line. On its own, a brain melody usually sounds extremely digital (unharmonious). So the phrases in the result that sound most familiar to us (00:00-00:08 and 00:26-00:30) are where the AI wins over the human brain signal; in these passages, the brain signal was either too weak or produced only a single note. Elsewhere, some passages take unexpected melodic turns, and these are where the human analog data starts to take effect.
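To serve as the base line for Magenta's Polyphony RNN, a transcribed brain melody first has to be quantized into time steps. The sketch below assumes Magenta's melody event convention (a MIDI pitch starts a note, -2 means no new event) and a hypothetical rate of 8 steps per second, which corresponds to 4 steps per quarter note at 120 BPM. `to_primer_melody` is an illustrative helper, not part of Magenta. The resulting list could then be handed to the model as a primer, for example via the `--primer_melody` flag of `polyphony_rnn_generate`, but the flag names should be checked against the Magenta version in use.

```python
NO_EVENT = -2  # Magenta melody value: no new event, the previous note keeps sounding


def _to_step(t, steps_per_second):
    # Round half up to the nearest step (t is non-negative).
    return int(t * steps_per_second + 0.5)


def to_primer_melody(events, steps_per_second=8, num_steps=None):
    """Quantize (time_in_seconds, midi_pitch) events into a melody event list.

    Each list index is one time step; when two notes land on the same
    step, the later one in `events` wins (a primer melody is monophonic).
    Events beyond `num_steps` are dropped.
    """
    steps = [_to_step(t, steps_per_second) for t, _ in events]
    length = num_steps if num_steps is not None else max(steps, default=-1) + 1
    melody = [NO_EVENT] * length
    for step, (_, pitch) in zip(steps, events):
        if step < length:
            melody[step] = pitch
    return melody


melody = to_primer_melody([(0.0, 60), (0.25, 64), (0.5, 67)])
print(melody)  # [60, -2, 64, -2, 67]
print(f'--primer_melody="{melody}"')
```

Because the brain melody only provides the primer, the generated piece can drift away from it, which matches the observation above that short or single-note brain melodies leave the AI in control.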

Media Content

Much of the media content comes from INDEED's official photos, and more information can be found on our landing page (please note that it is in German).

Exhibition

2019. June - Digital Kindergarten, Hamburg, Germany

2019. Nov - House of Beautiful Business, Lisbon, Portugal